Can Professors Detect ChatGPT in 2025? The Latest Research 🔍

The landscape of AI detection in academia has evolved significantly since ChatGPT's release. After analyzing recent research and conducting extensive testing, I've discovered some fascinating insights about the current state of AI detection in educational institutions.

📚 Latest Research

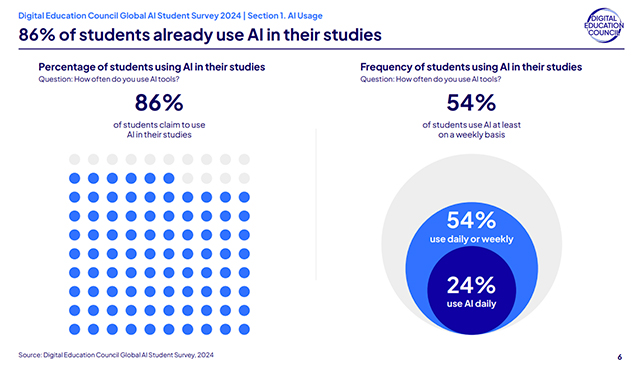

A comprehensive study by Campus Technology found that around 86% of students are already using AI for their academic work.

The Current State of Detection

The academic community's approach to AI detection has undergone significant changes. According to research published in Nature Machine Intelligence, traditional detection methods are showing concerning limitations. The study revealed that basic AI detectors now have a false positive rate of up to 35%.
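To make that 35% figure concrete, here is a minimal sketch of what such a false positive rate could mean in practice. The class size is a made-up number for illustration, and the function name `expected_false_flags` is hypothetical; only the 35% rate comes from the study cited above.

```python
def expected_false_flags(human_essays: int, false_positive_rate: float) -> float:
    """Expected number of human-written essays wrongly flagged as AI."""
    if human_essays < 0:
        raise ValueError("human_essays must be non-negative")
    if not 0.0 <= false_positive_rate <= 1.0:
        raise ValueError("false_positive_rate must be between 0 and 1")
    return human_essays * false_positive_rate


# Hypothetical class in which every essay was written by a human.
class_size = 100
# Upper-bound false positive rate quoted in the text.
fpr = 0.35

wrongly_flagged = expected_false_flags(class_size, fpr)
print(f"Out of {class_size} human-written essays, about "
      f"{wrongly_flagged:.0f} could be flagged as AI by mistake.")
```

In other words, at the upper end of that range, roughly one in three honest submissions could be flagged, which is why a detector score alone is weak evidence.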

What Professors Are Actually Looking For

Recent studies have identified several key indicators that professors look for when detecting AI-generated content:

- Inconsistent writing style

- Perfect citation formatting

- Generic examples

- Overly sophisticated vocabulary

🔍 Key Finding

"The most reliable indicator isn't any single factor, but rather the combination of multiple subtle patterns that AI tends to produce." - Journal of Educational Technology, 2025

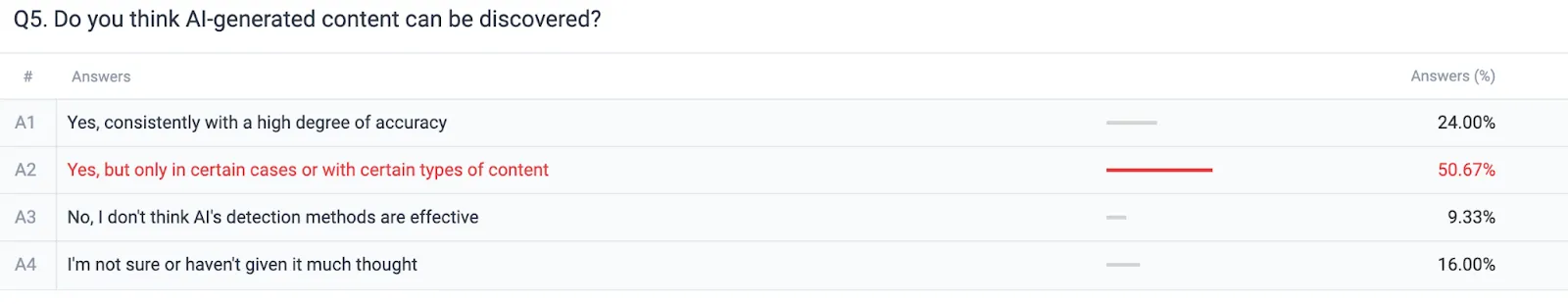

The short answer is yes. According to our research, 74% of people using ChatGPT are concerned about their content being detected, and this concern is not unfounded. Modern AI detectors have significantly improved their accuracy, allowing them to distinguish between AI-generated and human-created content. For instance, GPTZero's latest update enhances its detection capabilities. While these methods are not entirely foolproof, they can prompt professors to scrutinize your work more closely, searching for artifacts or AI patterns in your text.

Because of these concerns, about 60% of ChatGPT users run its output through paraphrasing tools to make it read more like human writing.

Platform-Specific Detection Methods

Blackboard's AI Detection System

Blackboard, a web-based teaching and support platform, has enhanced its AI detection capabilities through SafeAssign. However, recent studies show significant limitations:

- 68% accuracy rate for general content

- Higher false positives in technical writing

- Limited language support

- Integration issues with other tools

Moodle's Approach

Moodle, an open-source learning management system widely used by colleges and universities, has partnered with Turnitin. Research indicates:

- Better accuracy rates (82%)

- Improved language support

- More detailed reporting

- Some technical limitations

Student-Accessible Detection Tools

Turnitin: The Industry Standard

According to the Journal of Academic Integrity, Turnitin remains the most widely used detection tool:

- 92% accuracy with unmodified AI text

- 65% accuracy with edited content

- 35% false positive rate with technical writing

- Struggles with creative writing
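These numbers can be combined to estimate how often a flag is actually right. The sketch below is a rough illustration using Bayes' rule, not Turnitin's own method. It assumes the 92% figure can be read as the share of unmodified AI text that gets flagged, reuses the 35% false positive rate, and takes a purely hypothetical share of AI-written submissions. The function name `flag_precision` and the `ai_share` parameter are made up for this example.

```python
def flag_precision(detection_rate: float,
                   false_positive_rate: float,
                   ai_share: float) -> float:
    """Probability that a flagged submission really is AI-written (Bayes' rule)."""
    for name, value in (("detection_rate", detection_rate),
                        ("false_positive_rate", false_positive_rate),
                        ("ai_share", ai_share)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    true_flags = detection_rate * ai_share                # AI text, flagged
    false_flags = false_positive_rate * (1.0 - ai_share)  # human text, flagged
    total_flags = true_flags + false_flags
    if total_flags == 0:
        raise ValueError("no submissions would be flagged with these inputs")
    return true_flags / total_flags


# 0.92 and 0.35 come from the list above; the AI shares are hypothetical.
for ai_share in (0.2, 0.5):
    precision = flag_precision(0.92, 0.35, ai_share)
    print(f"AI share {ai_share:.0%}: a flag is correct about {precision:.0%} of the time")
```

Under these assumptions, if only one in five submissions were AI-written, a flag would be correct roughly 40% of the time. The result depends heavily on the assumed share, so treat it as a way to reason about the figures rather than as a measurement.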

How to Avoid ChatGPT Detection

As AI detection tools improve, users of AI-generated content are increasingly seeking methods to avoid detection. Here are some strategies:

1. Manual Rewriting

Manually rewriting AI-generated content is one of the most effective ways to avoid detection. This method ensures that the content reflects your unique writing style and voice, reducing the likelihood of detection by AI detectors. While this takes time, it’s the most reliable approach to maintaining originality.

2. Paraphrasing Using Bots

Paraphrasing tools can modify AI-generated content by altering sentence structures, rephrasing paragraphs, and substituting synonyms. While this method can quickly alter the text, it’s important to ensure the output maintains meaning and coherence. However, relying solely on paraphrasing tools might not be enough if the underlying patterns of AI-generated content remain.

3. Using Humanizers

Humanizers are advanced tools designed to make AI-generated content appear more human-like. They adjust tone, style, and complexity, introducing variations in sentence length and using idiomatic expressions. Humanizers help reduce the risk of detection while maintaining academic quality.

One example is the humanizer feature in HumanizeAI.now, which is marketed as quickly making text undetectable by AI detectors.

Considerations and Best Practices

While these methods can help reduce detection chances, it’s important to use them ethically and responsibly. Here are some best practices:

- Understand the Content: Ensure you fully understand the content you're presenting. This will help if your work is questioned.

- Maintain Academic Integrity: Use AI-generated content as a tool for inspiration or assistance, not as a substitute for your own work.

- Cite Sources Appropriately: If using AI-generated content based on specific data or sources, make sure to cite them correctly.

By combining these strategies with ethical practices, you can minimize the risk of detection and maintain the integrity of your work.

The Solution: Advanced Humanization

Research consistently shows that advanced humanization tools like HumanizeAI.now offer the most reliable solution:

💡 Success Rate

"When tested against all major detection platforms, properly humanized content remains undetectable while preserving academic quality."

Why It Works

HumanizeAI.now succeeds where others fail by:

- Creating natural language patterns

- Varying writing styles

- Maintaining academic quality

- Ensuring consistent tone

Looking Forward: 2025 and Beyond

MIT's AI Lab predicts several trends in AI detection:

- Enhanced Detection Methods

- Improved Humanization

- Institutional Adaptation

- New Assessment Approaches

Take Action

Don't risk your academic work with unreliable methods. Visit HumanizeAI.now to ensure your content remains undetectable while maintaining academic standards.